We felt guilt. Wracked with pain.

It’s the mid-1990s, and computers are impossibly hard to use.

Anyone who could program them, and who also cared about people, was ashamed.

So we fixed it.

Books were published, websites launched, a new profession was born.

It took a while but many of the key insights from that furore benefit us all every day.

For example, on an iPad, or in Google Docs, you don’t need to remember to press save to avoid losing your work.

It’s now impossible to start a new Internet company without its proposition being clear and explicable to the general reader, with a control flow that leads anyone through to a happy conclusion.

The most important revolution in the technology of information is now accessible to billions.

It’s every other industry that sucks.

Three examples…

Music – I live in Liverpool, which I suspect has an interesting, thriving music scene. But it is completely impenetrable.

Look at the flyer to the left. If you haven’t heard of Wretch 32 or Spank Rock, it tells you nothing.

What genre is the music? How varied is it? Might I like it? How much does it cost? What is that weird fuzzy blob in the top right, and the strange # sign? What’s an early bird?

If it was a computer startup it would be held to an even higher standard – you’d have to assume the reader didn’t even know what a “festival” was.

And this is one of the good examples.

Partly, I think, it is down to cliques wanting to keep people out, as they don’t have room in their venues. At least, that is how I feel as an outsider. Just like normal people felt about computers in the 1990s.

Every time I walk past a wall of such flyers, I gaze longingly and mystified. With no time, with no usability, I move on.

Another starving musician loses access to my discretionary spending.

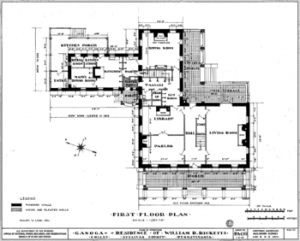

House buying –  Diagrams such as this are complex, but actually make it look relatively straightforward.

The process, especially in the UK, is insane.

The incentives are distorted, customer service low, paperwork excessive. And that’s even when you’re paying a decent lawyer.

Let’s just say, Steve Jobs hasn’t had any influence over the design of the property purchase system.

There are obvious improvements Government could make, but they screwed them up.

Estate Agents are locked into old business models, and seemingly don’t care.

Sellers stubbornly refuse to drop prices to actually sell their property, as if the market was being fixed by an evil demon, rather than natural laws of supply and demand.

Buyers get the information they need at the wrong time, forcing them to unnecessarily renegotiate or drop out.

Mortgage companies require copies of byzantine sequences of documents, with no logic, sense or humanity behind them.

And heck, even the usable alternative of rental is unusable, as there are inadequate rights by social convention (no pets, no painting, no security of tenure…), and not even a decent system where the community of renters can praise good or shame bad landlords.

In short, it is crapper than even a 1990s computer by far.

Government – You can probably all think of a recent occasion when you found part of Government excessively hard to use!

Benefits and tax systems that are so complex, so time-wasting, that you can’t optimise them, unless you are weak enough that your local council pays someone to work it all out for you, or strong enough that you can afford tax havens.

Democratic systems so unresponsive, so unaccountable, voting not only seems pointless, but is pointless.

At mySociety, we were obsessed with usability from the early days. A significant part of its purpose is to spread “usability” to Government services, by involuntarily making them more usable.

Nowadays, parts of Government are valiantly trying to fix such problems, but even that has come from the computer geeks. At best though, it’ll be lipstick (really lovely lipstick! that will show the way! but still lipstick) on a pig.

Where are the people on the inside radically revamping services throughout their supply chain to be awesome? Fixing the “whole product” of Government.

I’m sure they’re there, but I’m also sure there aren’t enough of them.

(Next time all this annoys you, take positive action by slinging mySociety a donation; they’re taking action on it at all sorts of levels, not just in the UK but internationally these days too.)

So yeah, enough guilt from us geeks.

When’s the rest of society going to step up, take responsibility for their parts, and make everything usable?

Thinking about it, there are quite a few areas that are very usable, they’re just largely invisible.

For example, the actual *supply* of electricity and water is excellent: commoditised, reliable, complex, but with a very simple user experience.

(Billing is another matter!)

Still, plenty of places that need work.

Transit also has a horrible UX. Despite training, it seems that most drivers don’t know what many signs mean, and just watch what happens at a traffic circle in America. Seems like the geeks need to mobilize and change other areas too, since “the rest of society” may not be up to the challenge.

Re music: Record shops are a terrible experience. Tens of thousands of CDs, each labelled with artist name, album name and some songs. It’s only when you go somewhere like Rough Trade, where each CD has a little blurb, that you realise what you’re missing. Book publishers realised the value of a good blurb decades ago.

It’s a question of the media you’re working in. Making websites usable is easy because you’re working with code. Making better healthcare is difficult because you’re working with people and silos and all the rest of it.

Service design has been one of the big trends of 2011 but the application of ideas we geeks have learned is restricted by their lack of applicability in other domains.

thruflo – haven’t spotted the service design trend. Sounds great! Examples?

So true! Those IRS forms are horrible! Why should I have to pay someone to do my taxes for me every year? I don’t even have complicated taxes.

I think the recent surge in new startups is proof that there is a lot of opportunity out there for making things simple.

@francis:

http://www.livework.co.uk/

http://servicedesigntools.org/

http://mostcontagious.com/

http://thruflo.com/post/997940379/why-ad-agencies-are-no-longer-in-charge

It’s a nice idea, but it doesn’t wash.

I’d say the truth is that computer people are still bad at usability, and so are lots of other industries. The recent mid-1990s timeline gives it away.

Far more indicative is how long it took for mouse-and-icon to get out of the Xerox lab that created it, how many decades it took to grasp the idea of automatically saving what’s been inputted (duh…), and how incomprehensible software guides and user manuals still are.

The reality is that there are dozens of industries which all but ignore the actual end user, and despite a thin veneer of user-friendliness, IT is still definitely one of them.

Only an IT person could seriously imagine the usability barrier is anywhere near being removed. This was always the problem – not asking the actual users. They’re the people who could easily tell any IT optimist how opaque 95% of it all still is – if he bothered to ask….

The point about a lack of ‘a decent system where the community of renters can praise good or shame bad landlords’ is a particular gripe of mine that I nearly had a swing at.

Consumer Focus proposed this a year ago (http://www.consumerfocus.org.uk/files/2011/01/Opening-the-door.pdf), which was widely reported, but little has emerged since then. This is probably because landlords, letting agents and the government have no motivation to set it up and there’s not an obvious commercial payoff.

Still, I’d love to see it.

Isn’t it ironic that the page of the UPA (http://www.upassoc.org/) is a little bit crappy?

Mark, fair point!

Watching my Mum try to set up a new iPad (which I had thought would be easy!) this Christmas made me realise just how bad even the best of IT is.