The topic of how to make our information society safe and fair regularly comes up in conversations.

I think we need some quite big, radical changes. Implementing them will need new public service Internet organisations.

This is my high-level list.

1. Access to culture

“People have too much knowledge already: it was much easier to manage them twenty years ago; the more education people get the more difficult they are to manage.” (one MP’s response to the Public Libraries Act 1850)

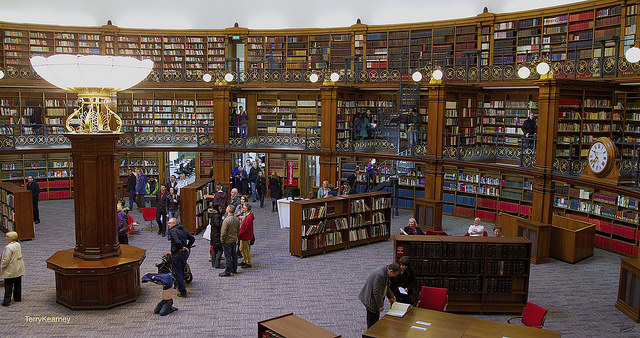

The printing press wasn’t a fair innovation until centuries later, when Victorians made the public library widespread. Some of the Internet is free at the point of use, due to advertising and due to the free culture movement. Some of it isn’t.


Much of the most detailed, intelligent reporting - such as the Financial Times and the Economist - is not free. Much vital culture - such as The Wire - is not free.

There are numerous business publications and research organisations, which aren’t free.

I think we’ll need a method, similar in purpose to public libraries, which gives poor teenagers access to the same culture as rich ones. Which gives someone with few resources starting a new business access to the same data as a rich corporation.

Where to start: Build on existing physical libraries - make sure they have subscriptions to paid-for research and culture. Build on free culture. Come up with imaginative ideas for new funding methods.

2. Digital literacy

Many technology innovations only reach their best when complemented with mass education.

We teach nearly everyone in the UK to read and write. A majority learn to drive. Without that quite complex training, the printing press and the car would be just for an elite.

What could everyone learn which would help us make better use of computers? Usability, which I love, will only get us so far. Until we have strong Artificial Intelligence (it’ll be a while), many people in society will need to understand computers well.

There are still plenty of technology changes to come, and we can’t know exactly what to teach until after they’ve happened. Meanwhile, we can make a start, and iterate as we learn more.

Where to start: Teach children to code. Make sure employees can use spreadsheets in a sophisticated way. Even teach police to type! (Richard Pope’s idea after seeing a desk sergeant who was very slow at entering his paperwork)

3. Professional programming

Engineers don’t build bridges that fall down, not any more. Software is constantly broken, our data stolen and our privacy breached.

There’s a whole lot of it which needs rebuilding in new ways which are barely researched yet. The industry needs to be professional to do this.

Ethical policies which help defend privacy. Quality policies which make it secure. Diversity policies which make it usable for everyone… There are lots of things a professional programming organisation could improve.

I’ve benefited a lot from the accessibility of programming - I learnt as a hobbyist child from my father. We can make programming both accessible, and professional.

Where to start: Look at other engineers. Look at other professions, like doctors and lawyers. Join and improve ethical professional bodies. Consciously try to not harm the freedom which general access to programming gives in the process. Create standards.

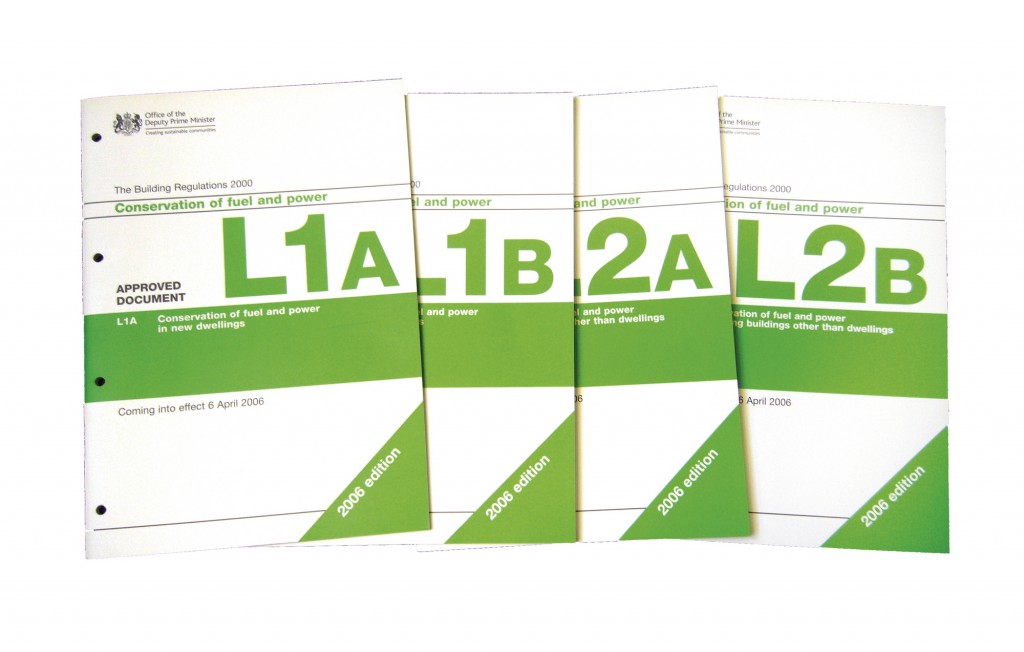

4. Building regulations

Increasing skills is important, but won’t be thorough enough. We need to enforce standards by law too.

Some of these will be mundane but vital, such as websites using only a few standard sets of terms and conditions. Others will be life saving, such as making sure your driverless car manufacturer has a high standard of software engineering practice.

Where to start: Regulate to stop coding in unsafe languages. Support organisations like I Am The Cavalry (automotive software safety). Build on industry guidelines until they are mandatory (e.g. MISRA).

5. Ethical cryptography

It would be foolish to use no encryption, allowing Governments and criminals to spy on everything we do. And completely unethical.

It is just as wrong to hope that all things will be encrypted in a libertarian utopia. There are criminals and enemies who courts should be able to get evidence from.

This blog post by Vinay Gupta describes the three actors which cryptography should model - the users, the Government and criminals. It describes a much more sophisticated threat model than we tend to talk about.

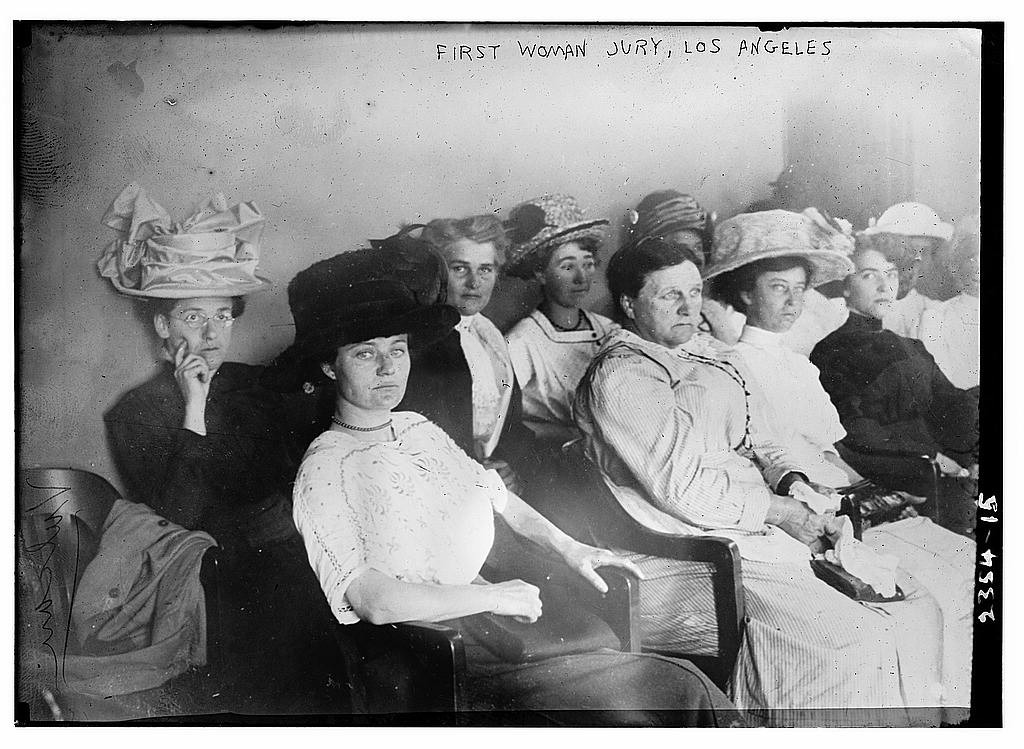

Where to start: Implement ideas like Cheap ID and jury-based crypto. Develop capability to practice a hybrid of law and tech. Use that to develop technical / legal systems similar to constitutions.

6. Power framework

There’s a war over who has access to the core ability of computers - to program them in arbitrary ways.

How is what computers do controlled?

To understand the issue, read Cory Doctorow’s article The Coming Civil War over General Purpose Computing. It’s a very complex question.

Where to start: Build on organisations like the Free Software Foundation, Electronic Frontier Foundation and Open Rights Group. Academic research which combines the abstract philosophy of delegated agency with practical user needs.

Conclusion

What do you think needs doing to make our new information society safe and fair? Leave a comment!

I expected to nod along, but #3 and #4 are surprising to me. I see software as more an art than an engineering practice. Regulating how things are built is incredibly hard to do without restricting the creative impulse. I believe it's doable when it matters (eg, remote control of cars), but should be approached very cautiously; where's the line between "little experiment" and "needs regulation"? What happens if your little experiment is suddenly popular? How do you encourage small, creative groups to build new software in regulated areas? Does it actually work? Compared to bridges and buildings, software is stunningly complex (and most of the opsec is managed on the "op" side).

#5, too - we need to have a much deeper debate about this as a society. Lots of crime would be a lot easier to prosecute if we had a camera in every home, or on every person. Both feasible today. So, why not? And if not, why is crypto up for debate?

It's still so early. We don't know what the possibility space is, let alone where the real failure points are. Enshrining law now, in the context of regulators who are so uninformed that they honestly think they can "ban encryption" would leave us all worse off.

It seems to me that, on balance, encouraging those building new tech systems (and I don't mean the programmers) to create systems that improve safety and fairness across society is still very much needed.

Thanks for the thoughtful and interesting response!

Software is both art and engineering. I think we can make it safer now without blocking creativity (e.g. memory safe languages only). Then more later when we know more...

We want a return to the pre-Web 2.0 norm of personal control over personal data. It's legally a more sound basis for data sharing and consent, better for personalisation and preferences, makes data more valuable (eg when linked to true intentions such as a major purchase), more efficient in many circumstances, and generally less Kafkaesque and weird. We can start to implement it today. New fortunes await the next wave of innovators and entrepreneurs building new health, finance, shopping, travel and culture apps and services based on properly permissioned flows of personal data.

It belongs in your core principles I think.

Where to start = rearchitect services to connect to standard personal data stores. Organisations: issue and in turn stand by to rely on reusable verified attributes: digital proofs of status, relationships (eg this is Francis' verified address, degree, driving licence etc).

Love it, and I hadn't covered it in my six points. Great suggestions where to start too.

I don't disagree with these, Francis, but would maybe (because I have increasingly tended to come at the problem from the campaigning end of things?) take a tougher - or at least different - line on some of them.

I'm glad your #1 was access to (use of?) culture, and your #2 literacy. Both essential. No point arguing chicken and egg, but I fear you have to be more radical yet if you're relying on the public libraries to 'save us'.

What I - and others, but possibly most articulately @billt - think we need is a genuine 'Digital *Public* Space'. This is difficult to unpack, but (for me) lies somewhere around the notions of public parks, public libraries, public service broadcasting and pop-up art spaces. What most people think of as 'public' these days is nothing of the sort; this is becoming as true off-line as on.

Key to truly public is truly anonymous.

So, while I agree with #5, I believe 'fair and equal' requires the ability to join the network anonymously - though, of course, to be able to provide trustworthy bona fides when/if justifiably challenged. This requires a radical rethink of the network, which is why redecentralisation caught my attention when I first saw you mention it.

I'm pretty hard core on 'literacy'. I was training as a teacher as the current National Curriculum was being issued, and had a go at what briefly became known as the BBC's 'Digital Curriculum' in the late 90s/early 2000s - but what we have in schools and more generally these days is woefully inadequate.

Media Studies used to be a 'joke' subject; these days, I'm half convinced a radically-improved version of it should be a core subject or key component of every subject.

I now know several people from their 20s to mid-30s who were failed utterly by school, who are functionally illiterate when it comes to the written word, but who over the last 5-7 years have educated themselves on YouTube (or equivalent, but mainly YouTube). For free. They are interested/engaged, interesting to talk to and coherent - but it cost them a LOT of effort. What they lack is a map.

Maps are hard.

Search is easy. Search makes you think you know stuff you actually don't - because if you can't even identify the context you borrowed the information from, you don't know what you 'know', and what you don't.

Maps distill a bunch of stuff that helps people find their way around; to get a sense of what they know, and what they don't. It's entirely possible - if costly - to make (good) maps but we should do MUCH more of that, and publish them for free.

A person with a map can make all sorts of choices they otherwise wouldn't know were there. People with maps tend to be freer / more autonomous than those without them...

(N.B. Better maps may also help other initiatives, such as 'open' - which is flailing around a lot at present, trying to find how it relates to principles and disciplines it barely appreciates and a landscape it hasn't even really begun to explore.)

A lot of the (digital) learning and 'literacy' I see is misdirected at activities that aren't fit for purpose; teaching people to drive software - rather than to build it themselves, or to be able to fix it, or at the very least to be able to appreciate the good, bad, ugly and dangerous parts.

#3 shows you appreciate that coders will always be an elite, so you clearly appreciate that teaching everyone to code isn't the answer. I think the (mass) answer will ultimately be somewhere on the 'aesthetic' rather than the technical end of things - 'play' vs 'study'; educating people to at a minimum be able to identify code/data products and services that safely meet their needs and desires.

Professionalising programming is, I fear, a more-than-generational problem.

I know folks at BCS and others are trying to think about this. I've spoken to several members of the Worshipful Company of Information Technologists(!) over the years - at least one a University Vice-Chancellor - and no-one who takes this seriously doubts that this is huge.

Take psychology as an analogy; a practice most people would recognise as some sort of scientific discipline. As a professional practitioner, you can be a Chartered Psychologist or member of one of the established Psychological or broader Scientific Institutions or (Royal) Colleges. You can study a bunch of internationally-recognised courses in established Universities to get a bunch of letters after your name.

This has been true across the world for quite a while and while it doesn't stop, e.g. Scientology or NLP 'life coaches' continuing to abuse psychological techniques for money, or didn't stop Nazis doing appalling experiments in WWII, it does tend to mean that people who do such things are sanctioned to the extent that a professional community can do so, i.e. various forms of marginalisation / exclusion or removal of official approval.

And this has taken about 100 years.

Ethics in psychology 'borrow from' general research and medical ethics; programming has no such 'base' to work from, but - as we've seen with care.data and NHS handling of medical information more generally - research and even medical ethics can be applied, when what you're doing affects people (which, by definition, personal data does).

Of course, while people like @RossJAnderson build conversations between the psychologists and security engineers, Number 10 reads an interview with Malcolm Gladwell and builds itself a 'nudge unit' which a couple of years later privatises itself...

So I agree with #3, but would (pragmatically) prefer to encourage a feeling of 'chivalry' amongst the taught and self-taught for now - rather than put too much effort into creating yet another 'priesthood'.

I do think you're right about standards and ethics and professionalism. I just don't think things will settle enough for several decades or more for anything other than a handful of highly dedicated people to keep steering things as best they can from the handful of national and international bodies that haven't been corrupted or co-opted.

Revolutions aren't the times to build institutions; they're the times we discover (and defend) what our REAL values are - or what we want them to be.

The only one I instinctively disagree with is #4. Do we want to trigger another 'Elf and Safety culture? Bad enough that Data Protection in the UK and elsewhere seems to have gone that way (it's always a stupid idea to separate legal compliance from fundamental human rights).

Let's leave laws for discernible crimes and transgressions, and be much clearer about (and stick to!) the underlying principles. Giving people handbooks makes them stupid - cf. the standards-compliant British e-passport, with whose chip we were able to do pretty much everything that the Home Office said we couldn't. Because (a) we actually read the standards, and (b) we understood them.

I care a lot about #crypto and #control, but I confess I rely on trusted others' deeper knowledge to guide me. I'll really miss @CasparBowden for that, and e.g. recently @richietynan's take on the destruction of the Guardian laptops https://firstlook.org/theintercept/2015/08/26/way-gchq-obliterated-guardians-laptops-revealed-intended/ gave me even more serious pause for thought.

As I said above, I think (initial) anonymity is key. But what does this even mean if at a hardware/firmware level you can't even guarantee your keypad and its invisible 2Mbit of storage isn't a keylogger for the Chinese Central Communist Party?

Thanks for provoking what I hope wasn't too verbose a response. I'll cross-post to my blog, just in case this doesn't upload.

Intrigued by these new public spaces, and love the educating people in mental maps idea.

I think we can borrow a lot of our ethics for professional programmers from other engineers.

Internet of things, and digitised finance, make me think we will need regulations, despite their flaws and problems.

Not too verbose at all, thanks for sharing so many interesting thoughts!

"#3 shows you appreciate that coders will always be an elite": this is the same mistake as "writers will always be an elite". True, but it doesn't mean that you shouldn't teach everyone to write.